**Multiresolution Tree Networks for 3D Point Cloud Processing**

[Matheus Gadelha](http://mgadelha.me), [Rui Wang](https://people.cs.umass.edu/~ruiwang/) and [Subhransu Maji](http://people.cs.umass.edu/~smaji/)

_University of Massachusetts - Amherst_

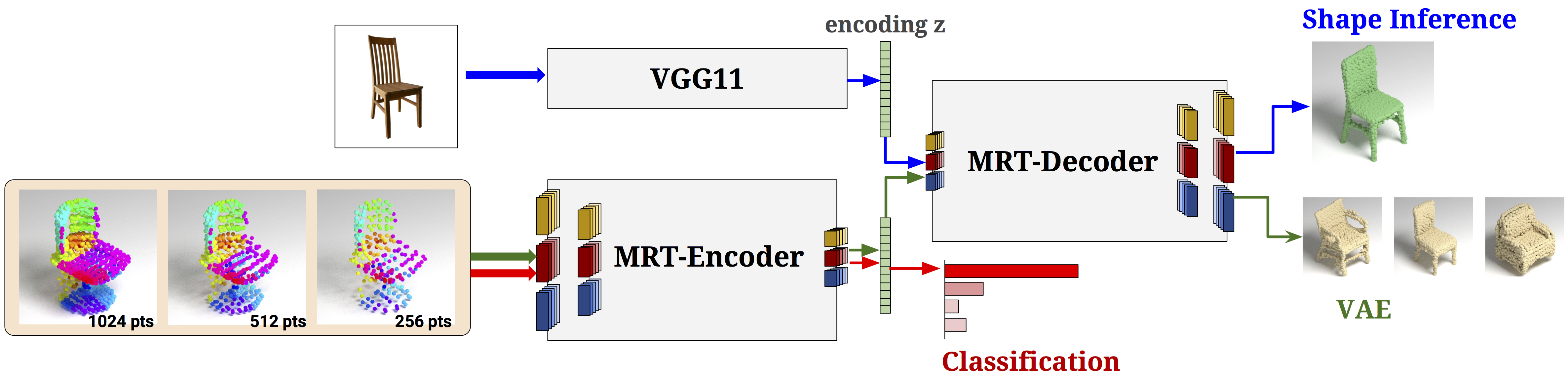

We present multiresolution tree-structured networks to process point clouds for 3D shape understanding and generation tasks. Our network represents a 3D shape as a set of locality-preserving 1D ordered lists of points at multiple resolutions. This allows efficient feed-forward processing through 1D convolutions and coarse-to-fine analysis through a multi-grid architecture, and it leads to faster convergence and a small memory footprint during training. The proposed tree-structured encoders can be used to classify shapes and outperform existing point-based architectures on shape classification benchmarks, while tree-structured decoders can be used to generate point clouds directly and outperform existing approaches on image-to-shape inference tasks learned using the ShapeNet dataset. Our model also allows unsupervised learning of point-cloud-based shapes by using a variational autoencoder, leading to higher-quality generated shapes.
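To make the multiresolution representation concrete, here is a minimal pure-Python sketch of one way to build locality-preserving ordered lists at several resolutions. It assumes the ordering comes from recursive median splits (a kd-tree style traversal) and that coarser levels come from averaging consecutive points; the function names `kd_order`, `avg_pool`, and `multires`, and the pooling factor `k`, are illustrative choices rather than the released implementation.

```python
import random

def kd_order(points, depth=0):
    """Order points by recursive median splits (kd-tree leaf order).

    Assumption of this sketch: the split axis cycles through x, y, z.
    """
    if len(points) <= 1:
        return list(points)
    axis = depth % 3
    pts = sorted(points, key=lambda p: p[axis])
    mid = len(pts) // 2
    return kd_order(pts[:mid], depth + 1) + kd_order(pts[mid:], depth + 1)

def avg_pool(ordered, k):
    """Average each run of k consecutive points to get a coarser list."""
    out = []
    for i in range(0, len(ordered) - k + 1, k):
        group = ordered[i:i + k]
        out.append(tuple(sum(c) / k for c in zip(*group)))
    return out

def multires(points, k=2, levels=3):
    """Return a list of ordered point lists, finest resolution first."""
    pyramid = [kd_order(points)]
    for _ in range(levels - 1):
        pyramid.append(avg_pool(pyramid[-1], k))
    return pyramid

random.seed(0)
cloud = [(random.random(), random.random(), random.random()) for _ in range(16)]
pyramid = multires(cloud, k=2, levels=3)
print([len(level) for level in pyramid])  # [16, 8, 4]
```

Because neighbors in each list tend to be neighbors in space, every level can be treated as a 1D signal with three channels, which is the kind of input a 1D convolution operates on.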

[**ArXiv paper**](https://arxiv.org/abs/1807.03520) | [**Code**](https://github.com/matheusgadelha/MRTNet)

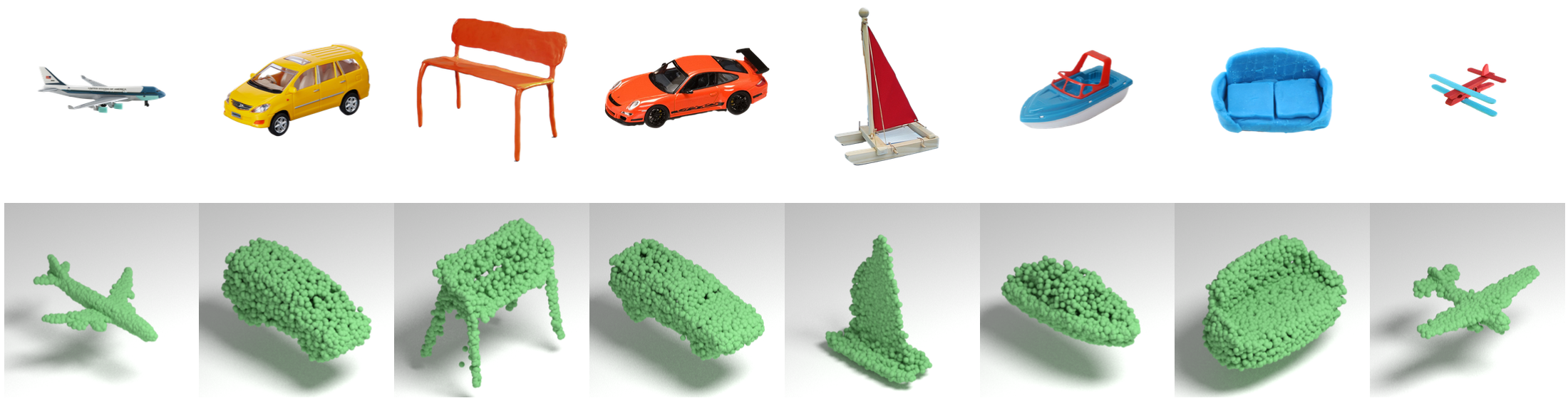

Shape Reconstruction
==========================================================================================

Shapes generated by applying MRTNet to Internet photos of furniture and toys. MRTNet is trained on the 13 categories of the ShapeNet renderings dataset. The network is capable of generating detailed shapes from real photos, even though it is trained only on images rendered with simple shading models.

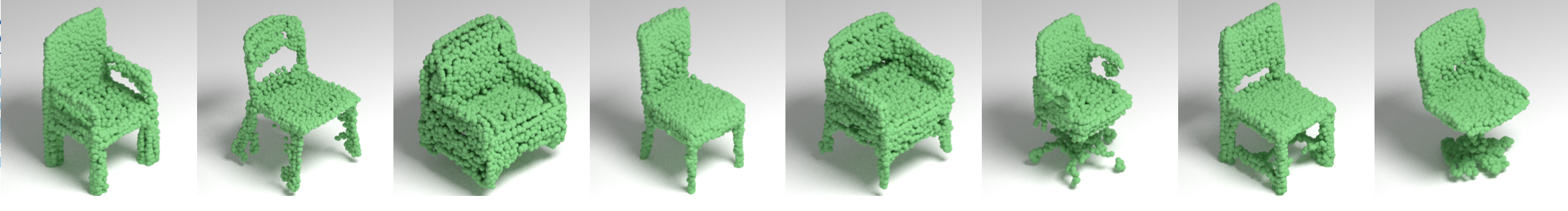

Point Cloud VAE
==========================================================================================

Results are generated by randomly sampling the encoding. MR-VAE preserves shape details much better than a single-resolution model. More details are in the paper.

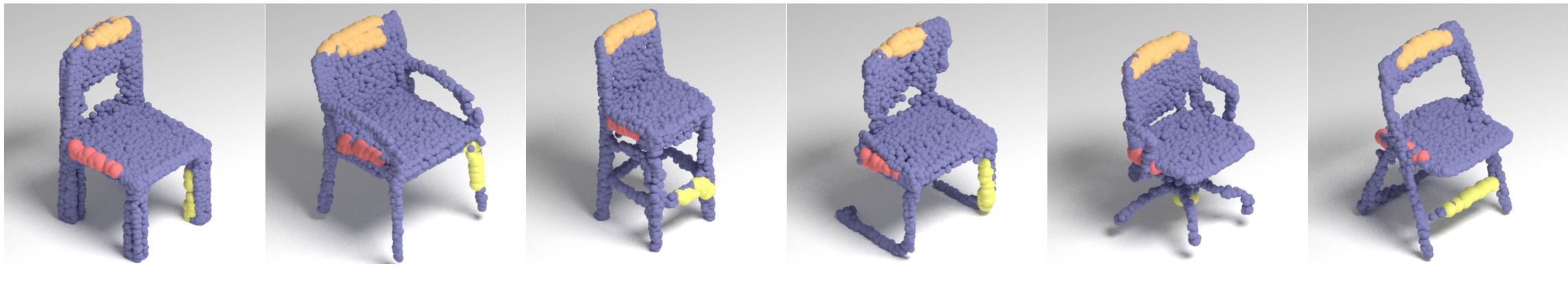

Point Correspondence
==========================================================================================

We picked three index ranges (indicated by three colors) from one example chair, and then color-coded the points in every shape that fall into these three ranges. The images show that the network learned to generate shapes with consistent point ordering.
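The color-coding procedure can be sketched as follows. This is only an illustration: the index ranges, color names, point count, and the helper `color_by_index` are hypothetical and are not taken from the paper or the code release.

```python
def color_by_index(num_points, ranges, default="gray"):
    """Assign a color to each point index based on fixed index ranges.

    `ranges` maps (start, stop) half-open index intervals to color names.
    """
    colors = [default] * num_points
    for (start, stop), color in ranges.items():
        for i in range(start, min(stop, num_points)):
            colors[i] = color
    return colors

# Hypothetical ranges, chosen once on a reference shape.
ranges = {(0, 4): "red", (6, 8): "green", (10, 12): "blue"}

# The same index ranges are reused, unchanged, for every generated shape.
chair_colors = color_by_index(12, ranges)
table_colors = color_by_index(12, ranges)
print(chair_colors)
```

The coloring depends only on a point's position in the output list, never on its coordinates. If the colored regions land on matching parts across shapes, the decoder is placing corresponding parts at consistent indices.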

Shape Interpolation

==========================================================================================

Citation

==========================================================================================

```
@inProceedings{mrt18,
  title = {Multiresolution Tree Networks for 3D Point Cloud Processing},
  author = {Matheus Gadelha and Rui Wang and Subhransu Maji},
  booktitle = {ECCV},
  year = {2018}
}
```